Edge analytics, the practice of analysing data directly on or near the industrial devices that generate it, is quietly changing how factories, utilities and logistics networks operate. Instead of funnelling torrents of sensor readings to a distant cloud, organisations run algorithms at the edge—inside gateways, PLCs or ruggedised micro‑servers—to obtain insights in milliseconds. This shift promises lower latency, reduced bandwidth costs and enhanced privacy, yet it also introduces architectural, organisational and skill‑related challenges. The following overview explores the core ideas, contemporary tools and best‑practice considerations surrounding edge analytics for Industrial Internet of Things (IIoT) deployments.

Why Edge Analytics Matters

Plant managers have long relied on supervisory control and data acquisition (SCADA) systems for monitoring, but modern machines generate far finer‑grained telemetry than legacy infrastructure can handle. When a robotic welding arm emits vibration data at two kilohertz and a vision camera streams hundreds of frames per second, round‑tripping every byte to a cloud platform adds delay that can translate into scrapped parts or safety incidents. By relocating feature extraction, anomaly detection and control‑loop optimisation to the factory floor, engineers shorten feedback cycles and keep production lines within tolerance bands.
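
To make the idea of local feature extraction concrete, here is a minimal Python sketch that reduces one second of two‑kilohertz vibration samples to a handful of summary features, so that only those features need to travel upstream. The function names, the choice of features and the synthetic sine‑wave input are illustrative assumptions, not a description of any particular product.

```python
import math

SAMPLE_RATE_HZ = 2_000  # vibration sampling rate mentioned in the text


def window_features(samples):
    """Reduce one window of raw vibration samples to a few summary features."""
    n = len(samples)
    mean = sum(samples) / n
    rms = math.sqrt(sum(x * x for x in samples) / n)
    peak = max(abs(x) for x in samples)
    crest_factor = peak / rms if rms > 0 else 0.0
    return {"mean": mean, "rms": rms, "peak": peak, "crest_factor": crest_factor}


def synthetic_second(freq_hz=50.0, amplitude=1.0):
    """Hypothetical test signal: one second of a pure sine wave."""
    return [
        amplitude * math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE_HZ)
        for i in range(SAMPLE_RATE_HZ)
    ]


raw = synthetic_second()
features = window_features(raw)
# 2,000 raw values per second shrink to four numbers per second.
print(len(raw), "samples ->", len(features), "features")
```

In this sketch the gateway would forward the four features once per second and keep the raw samples local, which is one simple way the bandwidth saving described above can arise.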

Key Technological Building Blocks

- Industrial Sensors and Fieldbuses – Accelerometers, thermocouples and power‑quality meters communicate via protocols such as Modbus, EtherCAT and OPC UA.

- Edge Gateways – Fanless computers aggregate signals, convert fieldbus traffic into IP‑based streams and provide local compute for analytics models.

- Stream‑Processing and Inference Runtimes – Tools such as Apache Kafka Streams, Azure IoT Edge modules and NVIDIA TensorRT support sliding‑window calculations and neural‑network inference close to the data source.

- Secure Communication Layers – Mutual‑TLS, hardware root‑of‑trust chips and zero‑trust network policies help establish provenance and integrity from sensor to dashboard.

- Device‑Management Platforms – Central consoles oversee fleet health, orchestrate firmware updates and roll back faulty containers with minimal downtime.
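
The sliding‑window calculations mentioned in the list above can be sketched in a few lines of plain Python. This is only an illustration of the technique under simple assumptions (a fixed‑size window and a running mean); the class name is hypothetical, and the stream‑processing tools named above expose their own, different APIs.

```python
from collections import deque


class SlidingWindowMean:
    """Running mean over the most recent `size` readings."""

    def __init__(self, size):
        if size <= 0:
            raise ValueError("size must be positive")
        self._window = deque(maxlen=size)
        self._total = 0.0

    def update(self, value):
        # Subtract the reading that is about to be evicted, if the window is full.
        if len(self._window) == self._window.maxlen:
            self._total -= self._window[0]
        self._window.append(value)
        self._total += value
        return self._total / len(self._window)


roller = SlidingWindowMean(size=3)
means = [roller.update(v) for v in [10.0, 20.0, 30.0, 40.0]]
print(means)
```

Keeping a running total avoids re‑summing the window on every reading, which matters on gateways with limited CPU headroom.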

Architectural Patterns

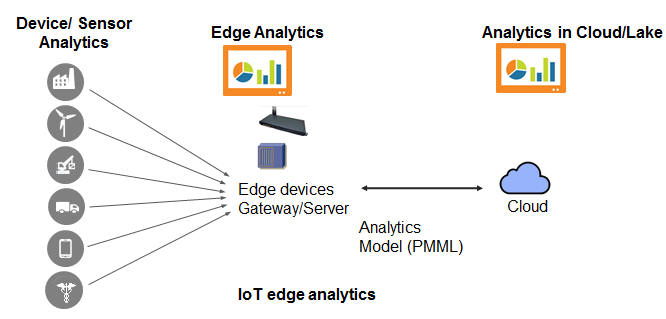

Edge architectures usually follow a tiered approach. The bottom layer comprises microcontrollers gathering raw signals. Next, gateway nodes perform feature engineering, local buffering and rules‑based alerting. A higher tier of on‑premise servers handles heavier workloads such as model retraining and batch reconciliation. Finally, filtered metrics and aggregated summaries flow to cloud lakes for historical analysis, compliance archiving and enterprise planning. This hierarchy balances real‑time responsiveness with long‑term insight.
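
As a rough sketch of the gateway tier described above, the following Python class buffers readings locally, raises a rules‑based alert when a limit is crossed and produces an aggregated summary for the upstream tiers. The class, its method names, the temperature rule and the limit of 90 degrees are all assumptions chosen for illustration.

```python
from collections import deque
from statistics import mean


class Gateway:
    """Toy gateway: local buffering, one alert rule, summarised uplink."""

    def __init__(self, temp_limit_c=90.0, buffer_size=1000):
        self.temp_limit_c = temp_limit_c
        # Bounded buffer: the oldest readings are dropped if the uplink stalls.
        self.buffer = deque(maxlen=buffer_size)
        self.alerts = []

    def ingest(self, device_id, temp_c):
        self.buffer.append((device_id, temp_c))
        # Rules-based alerting happens locally, without a cloud round trip.
        if temp_c > self.temp_limit_c:
            self.alerts.append(f"{device_id}: {temp_c:.1f} C over limit")

    def flush_summary(self):
        """Return aggregate metrics for upstream tiers and clear the buffer."""
        if not self.buffer:
            return None
        temps = [t for _, t in self.buffer]
        summary = {
            "count": len(temps),
            "min": min(temps),
            "max": max(temps),
            "mean": mean(temps),
        }
        self.buffer.clear()
        return summary


gw = Gateway()
for reading in [("oven-1", 70.0), ("oven-1", 80.0), ("oven-2", 95.0)]:
    gw.ingest(*reading)
print(gw.alerts)
print(gw.flush_summary())
```

Only the summary dictionary would leave the site in this sketch, which mirrors the flow of filtered metrics and aggregated summaries towards the cloud.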

Governance and Security Considerations

Unlike office IT, industrial environments place safety and uptime above all else. Any analytics component must therefore undergo rigorous validation before deployment. Role‑based access ensures maintenance contractors see only the devices they service, while encryption keys rotate automatically according to policy. Device attestation confirms firmware signatures, guarding against tampered binaries. Continuous monitoring highlights deviations from expected traffic patterns, prompting incident‑response playbooks.
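
The firmware check behind device attestation can be illustrated with a short Python sketch. Note the substitution: real attestation schemes typically rely on public‑key signatures anchored in a hardware root of trust, whereas this example uses an HMAC with a shared key from the standard library purely to show the verify‑before‑trust step. The key, the firmware bytes and the function names are hypothetical.

```python
import hashlib
import hmac


def sign_firmware(image: bytes, key: bytes) -> str:
    """Compute an authentication tag for a firmware image (HMAC-SHA256 stand-in)."""
    return hmac.new(key, image, hashlib.sha256).hexdigest()


def verify_firmware(image: bytes, key: bytes, expected_tag: str) -> bool:
    """Return True only if the image matches the tag issued at build time."""
    actual_tag = sign_firmware(image, key)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(actual_tag, expected_tag)


demo_key = b"demo-key-not-for-production"  # assumption: provisioned securely in practice
firmware = b"\x7fELF...gateway-firmware-v1"  # placeholder bytes, not a real image
tag = sign_firmware(firmware, demo_key)

print(verify_firmware(firmware, demo_key, tag))
print(verify_firmware(firmware + b"tampered", demo_key, tag))
```

A gateway following this pattern would refuse to load any image whose tag fails verification.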

Operational Challenges

Edge nodes often run in harsh conditions (vibration, dust or extreme temperature) and have constrained resources. Engineers compress models through quantisation and pruning so that inference fits comfortably within memory budgets. Version management of analytics code also grows tricky: rolling upgrades must avoid desynchronising sensor schemas or control‑loop parameters. Automated canary strategies release new logic to a small subset of devices before system‑wide rollout, limiting the impact of a faulty release.
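
One common way to pick a canary subset is to hash each device identifier into a bucket and release only to devices below a percentage cut‑off. The sketch below assumes this hashing approach, along with hypothetical device IDs and a hypothetical release label; a real device‑management platform may select canaries differently.

```python
import hashlib


def canary_bucket(device_id: str, release: str) -> int:
    """Map a device to a stable bucket in the range 0..99 for a given release."""
    digest = hashlib.sha256(f"{release}:{device_id}".encode()).digest()
    return int.from_bytes(digest[:4], "big") % 100


def in_canary(device_id: str, release: str, percent: int) -> bool:
    """True if the device belongs to the first `percent` buckets."""
    return canary_bucket(device_id, release) < percent


fleet = [f"gw-{i:03d}" for i in range(200)]  # hypothetical gateway IDs
canaries = [d for d in fleet if in_canary(d, "v2.1.0", 5)]
print(f"{len(canaries)} of {len(fleet)} gateways receive the canary release")
```

Because the bucket depends only on the device ID and the release label, widening the rollout from 5 to 20 percent keeps the original canaries in the group rather than reshuffling the fleet.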

Monitoring, Observability and Feedback

A successful edge deployment does not stop at inference. Telemetry on model latency, CPU load and prediction confidence feeds central dashboards, enabling continuous optimisation. Alert thresholds adapt dynamically, reducing unnecessary alarms while still surfacing genuine anomalies quickly. Closed‑loop feedback routes asynchronous cloud insights (perhaps a refined threshold learned overnight) back to gateway nodes, improving performance without manual intervention.
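
A dynamically adapting alert threshold can be sketched with exponentially weighted estimates of the mean and variance. The class below is an assumption‑laden illustration: the smoothing factor, the multiplier `k`, the warm‑up length and the minimum standard deviation are arbitrary example values, not recommended settings.

```python
class AdaptiveThreshold:
    """Flag readings that stray more than k standard deviations from an EWMA baseline."""

    def __init__(self, alpha=0.2, k=4.0, warmup=5, min_std=0.05):
        self.alpha = alpha        # smoothing factor for the baseline
        self.k = k                # width of the tolerance band in standard deviations
        self.warmup = warmup      # readings to observe before alerting
        self.min_std = min_std    # floor so a flat signal does not alert on tiny noise
        self.mean = None
        self.var = 0.0
        self.count = 0

    def update(self, x):
        """Return True if x looks anomalous; otherwise fold it into the baseline."""
        self.count += 1
        if self.mean is None:
            self.mean = x
            return False
        deviation = x - self.mean
        std = max(self.var ** 0.5, self.min_std)
        is_anomaly = self.count > self.warmup and abs(deviation) > self.k * std
        if not is_anomaly:
            # Exponentially weighted updates of mean and variance.
            self.mean += self.alpha * deviation
            self.var = (1 - self.alpha) * (self.var + self.alpha * deviation ** 2)
        return is_anomaly


detector = AdaptiveThreshold()
readings = [10.0, 10.2, 9.8, 10.1, 9.9, 10.0, 10.1, 9.9, 25.0]
flags = [detector.update(r) for r in readings]
print(flags)
```

Anomalous readings are deliberately excluded from the baseline here so that a single spike does not widen the band; the trade‑off is that a genuine, permanent level shift would keep alerting until the detector is reset or retuned.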

Skills and Training Pathways

Edge analytics straddles operational technology and data science, demanding expertise in signal processing, embedded systems and machine‑learning deployment. Many practitioners build these overlapping skills through an industry‑aligned data analyst course that introduces accelerated inference libraries, time‑series visualisation and MLOps practices specific to ruggedised hardware.

Tooling Ecosystem

Open‑source libraries remain central to innovation. Frameworks like EdgeX Foundry offer vendor‑neutral device abstractions, while Kubernetes distributions such as K3s bring container orchestration to gateway hardware. For machine‑learning inference, ONNX Runtime and TensorFlow Lite provide optimised binaries that support quantised integer operations, typically retaining most of a model's accuracy at a fraction of the computational footprint. Configuration‑as‑code templates document desired gateway states, promoting repeatable deployments across facilities.
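
To show what quantised integer operations involve, the following sketch applies a simple affine (scale and zero‑point) mapping from floating‑point weights to 8‑bit integers and back. It is a pure‑Python illustration of the general idea under simplifying assumptions, with hypothetical function names; it does not reproduce how ONNX Runtime or TensorFlow Lite implement quantisation internally.

```python
def quantize_int8(values):
    """Map floats to int8 using an affine scale and zero point."""
    # Extend the range to include zero so that 0.0 is exactly representable.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255.0 or 1.0  # fall back to 1.0 if every value is zero
    zero_point = round(-128 - lo / scale)
    quantized = [
        max(-128, min(127, round(v / scale) + zero_point)) for v in values
    ]
    return quantized, scale, zero_point


def dequantize(quantized, scale, zero_point):
    """Recover approximate floats from int8 codes."""
    return [(q - zero_point) * scale for q in quantized]


weights = [-1.0, -0.5, 0.0, 0.25, 1.0]  # hypothetical model weights
codes, scale, zero_point = quantize_int8(weights)
restored = dequantize(codes, scale, zero_point)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(codes, f"max reconstruction error: {max_error:.4f}")
```

Each weight now occupies one byte instead of four, at the cost of a small reconstruction error bounded by the step size `scale`.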

Emerging Trends

- AI at the Microcontroller – TinyML models tailored for 32‑bit MCUs can remove the need for separate gateways in ultralight deployments.

- 5G Private Networks – Factory‑wide private radio‑access networks aim to provide predictable latency for robotics and let roaming edge devices keep near‑deterministic response times.

- Digital Twin Integration – Real‑time sensor feeds synchronise with physics‑based simulations, enabling predictive what‑if scenarios without halting production lines.

- Federated Learning for Industrial Data – Gateways collaborate to improve shared models without exposing raw telemetry, preserving trade secrets and complying with data‑sovereignty rules.
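
The federated‑learning item above can be illustrated with the aggregation step of federated averaging: each gateway trains locally and shares only its model weights and sample count, and a coordinator combines them into a weighted mean. The sketch assumes weights are flat lists of floats and uses hypothetical numbers; production systems add secure aggregation, transport and many other concerns.

```python
def federated_average(updates):
    """Combine (weights, n_samples) pairs into a sample-weighted mean of the weights."""
    total = sum(n for _, n in updates)
    if total <= 0:
        raise ValueError("at least one sample is required")
    dim = len(updates[0][0])
    averaged = [0.0] * dim
    for weights, n in updates:
        if len(weights) != dim:
            raise ValueError("all weight vectors must have the same length")
        for i, w in enumerate(weights):
            averaged[i] += w * n / total
    return averaged


# Two hypothetical gateways report local weights and how many samples they trained on.
gateway_updates = [
    ([1.0, 2.0], 100),
    ([3.0, 4.0], 300),
]
global_weights = federated_average(gateway_updates)
print(global_weights)
```

Raw telemetry never leaves either gateway in this scheme; only the weight vectors and the sample counts are exchanged.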

Cost‑Management Strategies

Although edge analytics reduces cloud‑egress fees, additional hardware and maintenance overheads arise. Smart organisations adopt tiered storage, purging high‑frequency raw data after feature extraction while retaining aggregate snapshots. Containers are scheduled to sleep during production pauses, saving energy. Hardware selection balances headroom for future updates against upfront capital expenditure.

Regulatory Landscape

Standards bodies have begun defining frameworks for secure edge analytics. Guidelines emphasise software bill‑of‑materials (SBOM) transparency, vulnerability disclosure timelines and continuous patching processes. Compliance audits increasingly require proof of encryption, role‑segregation and incident‑response readiness. By embedding policy‑as‑code into deployment pipelines, teams demonstrate adherence without burdensome manual checklists.
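
A minimal flavour of policy‑as‑code is a set of machine‑checkable rules evaluated against a deployment manifest before release. The manifest keys and the three rules below are invented for illustration and do not correspond to any specific standard or tool.

```python
# Each policy is a human-readable name paired with a check on the manifest.
POLICIES = [
    ("TLS must be enabled", lambda m: m.get("tls_enabled") is True),
    ("SBOM must be attached", lambda m: bool(m.get("sbom_path"))),
    # A missing run_as_user is treated as root (0) and therefore rejected.
    ("Container must not run as root", lambda m: m.get("run_as_user", 0) != 0),
]


def evaluate(manifest):
    """Return the names of all policies the manifest violates."""
    return [name for name, check in POLICIES if not check(manifest)]


compliant = {"tls_enabled": True, "sbom_path": "sbom.json", "run_as_user": 1000}
risky = {"tls_enabled": False, "run_as_user": 0}

print(evaluate(compliant))
print(evaluate(risky))
```

A pipeline could fail the build whenever `evaluate` returns a non‑empty list, leaving an auditable record of which rule blocked the release.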

Organisational Culture and Change Management

Edge projects thrive when operations personnel and data professionals share vocabulary and objectives. Cross‑functional “insight squads” meet regularly to align success metrics: energy savings, quality targets or time‑to‑repair improvements. Internal knowledge hubs document sensor mappings, model assumptions and rollback procedures. Leadership encourages a blameless‑post‑mortem ethos, focusing on systemic remediation rather than individual fault.

Professional Growth within the Ecosystem

Regional centres of excellence have started tailoring curricula to the nuances of industrial analytics. A hands‑on data analyst course in Pune immerses learners in simulated factory environments, requiring them to containerise DSP filters, deploy predictive models on low‑power ARM boards and set up over‑the‑air update channels. Such experiential exercises accelerate readiness for field roles, bridging the gap between theoretical understanding and shop‑floor realities.

Measuring Success

Well‑chosen key performance indicators (KPIs) guide continuous improvement. Latency reports validate that edge inference responds within millisecond budgets. Bandwidth charts confirm reductions in upstream traffic. Prediction‑confidence histograms reveal model drift over seasonal cycles, triggering retraining schedules before accuracy degrades. Maintenance planners track mean‑time‑between‑failure intervals, while sustainability officers monitor kilowatt‑hours per unit of output.
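
Two of the KPIs above lend themselves to a short worked example: a tail‑latency percentile and mean time between failures. The sketch uses the nearest‑rank percentile definition and made‑up sample figures; both the data and the function names are assumptions for illustration.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of data at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def mtbf_hours(operating_hours, failures):
    """Mean time between failures; infinite if no failure was observed."""
    if failures == 0:
        return float("inf")
    return operating_hours / failures


latencies_ms = [4, 5, 5, 6, 7, 5, 4, 30, 6, 5]  # hypothetical inference latencies
print("p50:", percentile(latencies_ms, 50), "ms")
print("p95:", percentile(latencies_ms, 95), "ms")
print("MTBF:", mtbf_hours(operating_hours=720, failures=3), "hours")
```

Reporting a high percentile alongside the median matters here because a single slow inference, such as the 30 ms outlier in the sample, is invisible in the median yet may breach a millisecond budget.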

Looking Ahead

Edge analytics will continue to mature alongside advances in chip design, networking and deployment tooling. Hardware vendors are embedding neural accelerators directly into sensor packages, enabling first‑hop intelligence. Cloud providers are simplifying hybrid deployments so that model artefacts published in a registry automatically propagate to authorised gateways. Meanwhile, privacy‑enhancing technologies will safeguard intellectual property, allowing co‑operation between supply‑chain partners without compromising commercial secrets.

Conclusion

Industrial IoT generates more data than traditional architectures can feasibly transport or process centrally. Edge analytics answers this challenge by embedding intelligence where it is needed most—alongside the machines that keep our factories, power plants and transport systems running. To navigate the technical and organisational complexities, practitioners benefit from formal learning. A comprehensive data analytics course supplies foundational knowledge of distributed inference, secure communication and performance tuning.

For those seeking region‑specific exposure and hands‑on immersion, a practice‑oriented data analyst course in Pune places these skills in the context of real industrial scenarios. As adoption grows, mastery of edge analytics will underpin safer operations, leaner processes and more sustainable industry, marking a pivotal step in the digital transformation of the physical world.
